Introduction

Artificial Intelligence (AI) is no longer a futuristic concept; it’s shaping how businesses operate today. From chatbots providing personalized customer support to predictive models guiding business decisions, AI has proven its value across industries. But here’s the catch: AI systems need to handle massive amounts of data, and they must adapt to growing demands. This is where cloud infrastructure steps in, providing the foundation for scalable, cost-effective AI solutions.

Let’s dive into why scalability is critical for AI and how cloud infrastructure is transforming the AI landscape.

Understanding Scalable AI Solutions

Scalability is the ability of an AI solution to manage growth in data volume, model complexity, and user demand while maintaining performance, efficiency, and cost-effectiveness.

- Challenges in Scaling AI Solutions:

- Processing Power: Increasing data and complexity require high-performance computing, which can be costly and hard to manage.

- Storage: Scalable, secure storage is needed to handle large datasets without performance issues.

- Latency: Real-time applications demand fast data processing and responses, which is often hard to achieve as load grows.

The Need for Scalable AI Solutions

As businesses generate and process vast amounts of data, traditional on-premises systems often struggle to keep up with the computational requirements of AI applications. Scalable AI solutions are essential to:

- Manage Large Datasets: AI models require extensive data for training and inference. Scalable infrastructure ensures efficient storage and processing of these datasets.

- Adapt to Growing Demands: As AI applications evolve, computational needs can fluctuate. Scalable solutions allow for dynamic resource allocation, maintaining performance without over-provisioning.

- Optimize Costs: Scaling resources based on demand helps in managing operational expenses effectively.

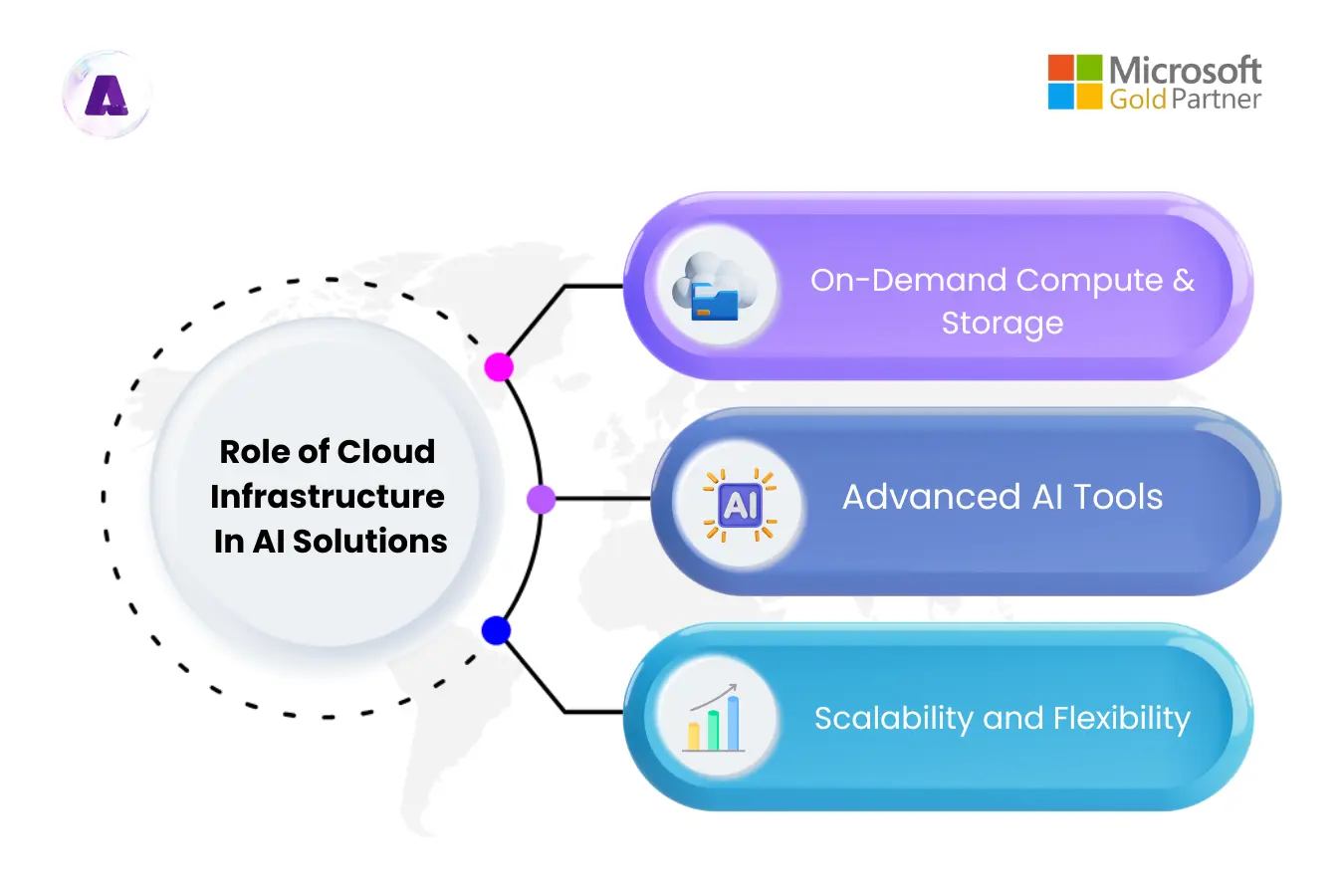

Role of Cloud Infrastructure in AI Solutions

Cloud platforms provide the necessary resources and services to build, deploy, and manage AI solutions efficiently:

- On-Demand Compute and Storage: Cloud services offer elastic compute power and storage, enabling businesses to scale resources up or down as needed. For instance, Google Cloud’s AI-optimized infrastructure supports high-intensity AI workloads, delivering global scale and performance.

- Advanced AI Tools: Cloud providers offer specialized AI services that simplify model development and deployment. AWS SageMaker, for example, provides a comprehensive suite of tools for building, training, and deploying machine learning models.

- Scalability and Flexibility: Cloud infrastructure supports the dynamic scaling of AI applications, accommodating varying workloads and ensuring consistent performance. Automated resource management can adjust capacity based on real-time usage data. Microsoft’s Azure OpenAI Service is one example: companies using GPT models through it can rely on Azure’s autoscaling capabilities to allocate resources according to real-time demand.

How to Build Scalable AI Solutions Using Cloud Infrastructure: Challenges & Best Practices

1. Harness Managed AI Services in the Cloud:

- Cloud platforms offer managed AI services like Amazon SageMaker, Google Vertex AI (the successor to AI Platform), and Azure Machine Learning, simplifying AI workflows from data preparation to deployment.

- These services enable data scientists to focus on improving models while offloading infrastructure management.

- Best Practices:

- Utilize managed services to streamline data preprocessing, model training, and deployment.

- Activate auto-scaling to adjust resources based on demand and optimize costs dynamically.

2. Streamline Data Pipelines for Scalability:

- Efficient data management ensures seamless scalability. Cloud-based solutions like data lakes and serverless processing tools eliminate traditional data flow limitations.

- Real-time and batch processing capabilities ensure quick and efficient data handling.

- Best Practices:

- Use tools like AWS Glue or Google Dataflow for scalable, on-demand ETL processes.

- Design data pipelines to handle diverse workloads, ensuring consistent performance.
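
As a minimal sketch of the batch side of such a pipeline, the Python below streams records through extract, transform, and load stages in fixed-size chunks, so memory use stays bounded as data volume grows. It is not AWS Glue or Dataflow code; the function names (`extract`, `transform`, `load_in_batches`), the record shape, the validation rule, and the batch size are all assumptions chosen for illustration.

```python
from itertools import islice
from typing import Iterable, Iterator


def extract(rows: Iterable[str]) -> Iterator[dict]:
    """Parse raw 'user_id,value' lines lazily, one record at a time."""
    for line in rows:
        user_id, value = line.strip().split(",")
        yield {"user_id": user_id, "value": float(value)}


def transform(records: Iterable[dict]) -> Iterator[dict]:
    """Drop invalid records and add a derived field."""
    for record in records:
        if record["value"] < 0:
            continue  # hypothetical validation rule
        yield {**record, "value_squared": record["value"] ** 2}


def load_in_batches(records: Iterable[dict], batch_size: int) -> Iterator[list[dict]]:
    """Group records into batches; a real sink would write each batch out."""
    iterator = iter(records)
    while batch := list(islice(iterator, batch_size)):
        yield batch


raw_lines = ["u1,2.0", "u2,-1.0", "u3,3.0", "u4,4.0"]
batches = list(load_in_batches(transform(extract(raw_lines)), batch_size=2))
print([len(batch) for batch in batches])
```

Because each stage is a generator, the same code handles four records or four million; only the batch size and the sink would need to change for a larger workload.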

3. Leverage Auto-Scaling and Traffic Distribution:

- Auto-scaling adjusts resources dynamically, while traffic distribution through load balancing maintains system performance.

- These features prevent overloading and downtime during demand spikes.

- Best Practices:

- Configure auto-scaling based on resource utilization metrics like CPU and memory.

- Deploy load balancers to evenly distribute traffic and enhance reliability during peak periods.
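
To make the two ideas concrete, here is a small sketch of a proportional scaling rule driven by CPU utilization, followed by round-robin traffic distribution. The formula (current replicas times observed metric divided by target metric, rounded up) is the one the Kubernetes Horizontal Pod Autoscaler documents; the function name, the replica limits, and the sample numbers are assumptions for illustration, and cloud autoscalers add cooldowns and smoothing that this sketch omits.

```python
import math
from itertools import cycle


def desired_replicas(current: int, observed_cpu: float, target_cpu: float,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Proportional scaling: grow or shrink so average CPU approaches the target."""
    raw = math.ceil(current * observed_cpu / target_cpu)
    return max(min_replicas, min(max_replicas, raw))


# Scale out: 4 replicas at 90% CPU against a 60% target.
scaled_out = desired_replicas(current=4, observed_cpu=90, target_cpu=60)
# Scale in: 4 replicas at 15% CPU against the same target.
scaled_in = desired_replicas(current=4, observed_cpu=15, target_cpu=60)
print(scaled_out, scaled_in)

# Round-robin load balancing: hand 8 requests to the scaled-out replicas in turn.
replicas = [f"replica-{i}" for i in range(scaled_out)]
assignments = [replica for replica, _ in zip(cycle(replicas), range(8))]
print(assignments)
```

The `min_replicas` and `max_replicas` bounds matter in practice: the floor keeps the service available during quiet periods, and the ceiling caps spend during unexpected spikes.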

4. Adopt Modular and Microservices Architectures:

- Breaking down AI solutions into modular components or microservices simplifies scaling and maintenance.

- Independent scaling of components like data processing or model inference boosts efficiency.

- Best Practices:

- Use container orchestration tools like Kubernetes or Amazon ECS, optionally with AWS Fargate as serverless container compute, for streamlined microservices management.

- Group related functionalities to enable targeted scaling and reduce dependencies.
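
The benefit of independent scaling can be illustrated with a rough sizing sketch: each component gets a replica count from its own load and per-replica capacity, rather than the whole application being scaled as one unit. The `Service` class, the throughput figures, and the 20% headroom are hypothetical values chosen for the example.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    name: str
    requests_per_second: float   # load arriving at this component
    capacity_per_replica: float  # requests per second one replica can serve


def replicas_needed(service: Service, headroom: float = 0.2) -> int:
    """Size one component from its own load, with spare capacity for bursts."""
    required = service.requests_per_second * (1 + headroom)
    return max(1, math.ceil(required / service.capacity_per_replica))


services = [
    Service("data-processing", requests_per_second=500, capacity_per_replica=250),
    Service("model-inference", requests_per_second=500, capacity_per_replica=45),
]
plan = {service.name: replicas_needed(service) for service in services}
print(plan)
```

Both components see the same traffic here, but the slower inference service needs far more replicas. In a monolith, the lightweight data-processing code would be replicated just as many times.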

5. Strengthen Security and Ensure Compliance:

- Protect sensitive AI data with built-in cloud security tools like encryption and Identity and Access Management (IAM).

- Adhering to compliance standards ensures data protection and avoids regulatory issues.

- Best Practices:

- Implement role-based access controls to restrict access to critical data and resources.

- Encrypt data in transit and at rest to maintain security throughout its lifecycle.

- Perform regular security audits to identify vulnerabilities and verify compliance with regulations such as GDPR or HIPAA.
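
Role-based access control can be sketched in a few lines. The roles and permissions below are hypothetical, and a real deployment would define them in the cloud provider’s IAM service rather than in application code; the point of the sketch is the deny-by-default check.

```python
from enum import Enum


class Permission(Enum):
    READ_DATASET = "read_dataset"
    TRAIN_MODEL = "train_model"
    DEPLOY_MODEL = "deploy_model"


# Hypothetical roles, each mapped to the smallest set of permissions it needs.
ROLE_PERMISSIONS: dict[str, frozenset[Permission]] = {
    "analyst": frozenset({Permission.READ_DATASET}),
    "data_scientist": frozenset({Permission.READ_DATASET, Permission.TRAIN_MODEL}),
    "ml_engineer": frozenset(Permission),  # all permissions
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: unknown roles and unlisted permissions are rejected."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())


print(is_allowed("data_scientist", Permission.TRAIN_MODEL))
print(is_allowed("analyst", Permission.DEPLOY_MODEL))
```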

6. Track and Optimize Costs Effectively:

- AI workloads can strain budgets without careful cost management. Cloud pricing models like spot instances and reserved instances help reduce expenses.

- Cloud FinOps practices provide better visibility and control over resource spending.

- Best Practices:

- Set up budgets and alerts to monitor expenses and avoid unexpected costs.

- Use spot instances for flexible tasks and reserved instances for predictable workloads.

- Regularly analyze resource consumption to identify areas for optimization and cost savings.
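
A back-of-the-envelope calculation shows how pricing models and budget alerts fit together. The hourly prices, instance counts, and budget below are invented for illustration; real prices vary by provider, region, and instance type, and spot capacity can be reclaimed at short notice.

```python
HOURS_PER_MONTH = 730

# Hypothetical hourly prices in USD, not taken from any provider's price list.
PRICES = {"on_demand": 3.00, "reserved": 1.80, "spot": 0.90}


def monthly_cost(pricing: str, instances: int, hours: float = HOURS_PER_MONTH) -> float:
    """Cost of running `instances` machines for `hours` under one pricing model."""
    return round(PRICES[pricing] * instances * hours, 2)


def budget_alerts(spend: float, budget: float, thresholds=(0.5, 0.8, 1.0)) -> list[float]:
    """Return the budget thresholds that current spend has reached."""
    return [t for t in thresholds if spend >= t * budget]


# Steady inference fleet on reserved capacity, interruptible training on spot.
inference = monthly_cost("reserved", instances=2)
training = monthly_cost("spot", instances=8, hours=100)
total = inference + training

# The same workload priced entirely on demand, for comparison.
all_on_demand = monthly_cost("on_demand", 2) + monthly_cost("on_demand", 8, hours=100)

print(total, all_on_demand, budget_alerts(total, budget=4000))
```

Under these assumed prices, the mixed plan costs roughly half of the all-on-demand plan, and the alert function reports that spend has passed the 50% and 80% marks of a 4,000 USD budget.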

Choose the Right Cloud Provider

Selecting the right cloud provider for your AI needs is crucial. Different platforms offer unique features, pricing models, and services that can impact scalability, performance, and cost. Partnering with the right provider ensures seamless integration and efficient scaling.

Best Practices:

- Evaluate providers based on your specific workload requirements, including performance, cost, and security needs.

- Consider working with a professional service provider like Aptly Technology, a Microsoft Certified Gold Partner, which helps you navigate the complexities of cloud infrastructure and AI, ensuring the right solutions for your business goals. Aptly’s extensive experience in cloud infrastructure and AI can guide you in optimizing your resources, making the most out of cloud services while enhancing the scalability and efficiency of your AI systems.

Real-World Example:

- Netflix’s AI Journey

Netflix is a prime example of leveraging scalable AI. Its recommendation engine analyzes viewer data to suggest what you might want to watch next. But during high-traffic events, such as the release of a new season of a popular series, its AI system must handle a surge in user activity.

Netflix uses AWS cloud infrastructure to scale its resources dynamically, ensuring uninterrupted service. AWS’s elastic computing capabilities allow Netflix to manage these spikes without over-provisioning resources.

- BMW Leverages Azure AI Solutions to Accelerate Vehicle Development

Before 2018, BMW Group’s development fleet depended on manual data transfers and on-premises data processing, which considerably slowed down vehicle development and prototype cycles.

To address this, the company developed a Mobile Data Recorder (MDR) solution, installing an IoT device in each development car to send data over a cellular connection to an Azure cloud platform, where Azure AI solutions enable efficient data analysis.

The MDR system and Copilot powered by Azure greatly improve data accessibility, accelerate prototyping and troubleshooting, and enhance overall development quality.

Conclusion

Building scalable AI solutions with cloud infrastructure isn’t just about keeping up with demand; it’s about future-proofing your business. By leveraging cloud computing’s flexibility, advanced tools, and cost-effective scaling, companies can unlock the full potential of AI. Cloud computing supplies the data processing capacity and computational power that AI requires, making it easier for businesses to scale their AI initiatives efficiently.

Whether you’re a startup or an enterprise, adopting scalable AI systems within the cloud will not only enhance performance but also give you a competitive edge in the ever-evolving digital landscape.

Are you ready to scale your AI journey?